Back in January we published an article about the principles of EAT used by Google when assessing the quality and relevancy of websites for search. This month we delve further into the review process that Google continually uses to assess and improve the quality of search results, and how they use a panel of Search Quality Raters to provide feedback, based on a set of key guidelines.

Although Google now uses machine learning to analyse search results and interactions to improve future ranking performance, they are also constantly experimenting with new ideas to improve the way that search results are generated and displayed. One of the ways they do this is by getting feedback from real people, who are known as Search Quality Raters.

These Quality Raters are spread out all over the world and are highly trained using Google’s extensive guidelines. They are given tasks to assess, which usually involve a query shown with two alternative sets of search results: the existing results, and a new set produced by a change in the search algorithms. They then provide feedback based on the guidelines, which helps Google’s engineers understand which changes make the search results more useful.

The search engineers at Google are continually looking at ways to make the search results more relevant and useful. They will often have ideas for changes that could be made, and each idea then has to go through extensive testing and a rigorous evaluation process, which analyses metrics to decide whether to implement the proposed change. This data goes through a thorough review by experienced engineers and search analysts, as well as legal and privacy experts, who then determine whether the change is approved to launch.

Google reports that in 2020 they ran over 600,000 experiments that resulted in more than 4,500 improvements to Search. These experiments included:

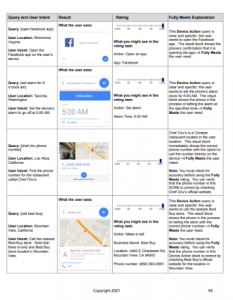

383,605 Search quality tests – Google uses the Search Quality Raters to measure the quality of search results on an ongoing basis. These raters assess how well a website gives people what they are looking for, and evaluate the quality of results based on the EAT principles (expertise, authoritativeness and trustworthiness of the content). To ensure a consistent approach, Google has the Search Quality Rater Guidelines, a document of more than 170 pages that provides guidance and examples for appropriate ratings. These guidelines are publicly available, so anyone can see how sites may be assessed.

62,937 Side-by-side experiments – Google is constantly improving their algorithms to return better search results, and uses the panel of Search Quality Raters in the launch process. In side-by-side experiments, the Raters review two different sets of search results: one with the proposed change already implemented and one without. Google asks the Raters which results they prefer and why.

17,523 Live traffic experiments – In addition to the Search quality tests, Google conducts live traffic experiments to see how real people interact with a feature before launching it to everyone. These features are shown to just a small percentage of people, usually starting at 0.1%. Once enough data has been collected, Google compares the experiment group to a control group to analyse data such as what people click on, how many queries were made, whether queries were abandoned, how long it took for people to click on a result, and so on. These results are used to measure whether engagement with the new feature is positive, and to ensure that the changes Google makes are increasing the relevance and usefulness of their results for everyone.

3,620 Launches – Every proposed change to Google’s search results goes through a review by their most experienced engineers and data scientists, who carefully review the data from all the different experiments to decide if the change is approved to launch. Many proposed changes never go live: a change is launched only if it can be shown that it actually makes things better for people.
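
For readers who like to see the mechanics, the live traffic experiments described above follow the familiar pattern of an A/B test. The short Python sketch below is purely illustrative and is not Google’s method: the function names, the hashing approach and the click figures are all our own assumptions. It shows one common way to place a small share of users (0.1% here) into an experiment group, and a standard two-proportion z statistic for comparing click-through rates between a control group and an experiment group.

```python
import hashlib
import math


def in_experiment(user_id: str, experiment: str, fraction: float = 0.001) -> bool:
    """Deterministically place roughly `fraction` of users in the experiment group.

    Hypothetical helper: hashing the user and experiment name together means
    the same user always gets the same answer for a given experiment.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    # Map the first 32 bits of the hash to a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return bucket < fraction


def click_rate_z(clicks_control: int, n_control: int,
                 clicks_experiment: int, n_experiment: int) -> float:
    """Two-proportion z statistic for the difference in click-through rate.

    A positive value means the experiment group clicked more often than
    the control group.
    """
    p_control = clicks_control / n_control
    p_experiment = clicks_experiment / n_experiment
    pooled = (clicks_control + clicks_experiment) / (n_control + n_experiment)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_control + 1 / n_experiment))
    return (p_experiment - p_control) / std_err


if __name__ == "__main__":
    # Made-up figures, for illustration only.
    z = click_rate_z(clicks_control=4000, n_control=10000,
                     clicks_experiment=4300, n_experiment=10000)
    print(f"z statistic: {z:.2f}")
    print("user-123 in experiment:", in_experiment("user-123", "new-snippet-layout"))
```

As a rule of thumb, a z statistic above about 2 suggests the difference in click-through rate is unlikely to be down to chance alone. A real evaluation would look at many more signals than clicks, such as the abandoned queries and time-to-click measures mentioned above.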

You can get more background on this process in Google’s ‘home movie’ on YouTube, which we featured in an article last December.

And as ever, if you have any questions about search – and the impact of these developments on SEO – please contact us for a discussion as we have been leading SEO specialists for more than 20 years.